I spent my third year of the BUT MMI program tinkering with a problem that had been stuck in my head for a while: how can I map real dancers onto avatars on a virtual stage without drowning in middleware or vendor lock-in? The dance gala in Toulon, themed around the Paris 2024 Olympic Games, was coming up, and I wanted something more vibrant than canned motion capture. This write-up documents how I wired Ultralytics' YOLOv8 pose model straight into Unity so that performers could walk on stage and see their motion bloom into stylised athletes in real time.

Why I built it

For the AR/VR interaction course, most student demos revolved around hand-crafted animations or controller inputs. I wanted live bodies. Pose-estimation models can already extract rich skeleton data from an ordinary camera feed; the missing piece was translating those skeletons into something Unity understands. Instead of buying a licence for a commercial bridge, I wrote my own Python wrapper that streams keypoints to the game engine over UDP. That gave me full control over filtering, tracking, and how many bodies we could support simultaneously during the gala.

Architecture at a glance

- Capture & inference. OpenCV captures frames and resizes them before forwarding them to the YOLO detector.

- Pose parsing. I normalise the keypoints, build a per‑person payload, and optionally run a smoothing filter.

- Networking. A lightweight UDP client sends JSON over localhost to the Unity listener.

- Visualisation. Unity scenes read the stream and drive one character rig per detected performer.

Python tooling

The command-line entry point (pywui) had to be flexible enough for both rehearsals and showtime. It accepts arguments for the video source, model, detection mode, plotting, logging, GPU usage, and whether to filter the signal. I added dedicated switches for the BoT-SORT tracker for when IDs needed to persist across frames. The script auto-selects the OpenCV capture backend depending on whether it runs on Windows or a Unix-like system, so we could develop on our laptops and deploy from the theatre booth without friction.
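A minimal sketch of such an entry point follows. The flag names, defaults, and the per-OS backend mapping are assumptions for illustration, not pywui's actual interface; only `argparse` and OpenCV's `CAP_*` backend constants are real APIs.

```python
import argparse
import platform


def build_parser() -> argparse.ArgumentParser:
    # Hypothetical flags mirroring the options described above (not pywui's real ones).
    parser = argparse.ArgumentParser(
        prog="pywui", description="Stream YOLOv8 pose keypoints to Unity over UDP."
    )
    parser.add_argument("--source", default="0", help="camera index or video path")
    parser.add_argument("--model", default="yolov8n-pose.pt", help="pose weights")
    parser.add_argument("--mode", choices=["predict", "track"], default="predict")
    parser.add_argument("--tracker", default="botsort.yaml", help="tracker config for track mode")
    parser.add_argument("--plot", action="store_true", help="plot raw vs filtered keypoints")
    parser.add_argument("--log", action="store_true", help="enable verbose logging")
    parser.add_argument("--gpu", action="store_true", help="run inference on CUDA")
    parser.add_argument("--filter", action="store_true", help="apply the moving average")
    parser.add_argument("--window", type=int, default=5, help="moving-average window")
    return parser


def capture_backend_name(system: str = "") -> str:
    """Return the name of an OpenCV VideoCapture backend constant for this OS."""
    system = system or platform.system()
    if system == "Windows":
        return "CAP_DSHOW"         # DirectShow
    if system == "Linux":
        return "CAP_V4L2"          # Video4Linux2
    if system == "Darwin":
        return "CAP_AVFOUNDATION"  # macOS
    return "CAP_ANY"               # let OpenCV decide


def open_capture(source: str):
    import cv2  # lazy import: the helpers above work without OpenCV installed

    if source.isdigit():  # camera index: use the OS-specific backend
        return cv2.VideoCapture(int(source), getattr(cv2, capture_backend_name()))
    return cv2.VideoCapture(source)  # file or stream URL: default backend
```

Typical use would be `args = build_parser().parse_args()` followed by `cap = open_capture(args.source)`. Returning the constant's name rather than its value keeps the OS logic testable on machines without OpenCV.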

At runtime the main loop reads a frame, halves its resolution to keep latency low, and passes it through the YOLO stack. Results go straight into the UDP socket as JSON, while an optional window displays the detections for debugging. When Unity sends messages back (useful while tuning avatars), the same socket receives them without blocking the send loop.
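The loop can be sketched as below, assuming an Ultralytics `YOLO` pose model, an OpenCV capture, and an already-created UDP socket. The port number, payload shape, and helper names are hypothetical. The key detail is the non-blocking socket: `poll_messages` drains whatever Unity has sent and returns immediately when nothing is waiting.

```python
import json
import socket


def poll_messages(sock: socket.socket, bufsize: int = 4096) -> list:
    """Drain pending datagrams from a non-blocking socket without stalling."""
    messages = []
    while True:
        try:
            data, _addr = sock.recvfrom(bufsize)
        except BlockingIOError:
            break  # nothing left to read this frame
        except ConnectionResetError:
            break  # Windows raises this after a send to a port nobody listens on
        messages.append(data.decode("utf-8"))
    return messages


def run(capture, model, sock, unity_addr=("127.0.0.1", 5052), show=False):
    """Hypothetical main loop: read, halve, infer, send JSON, poll replies."""
    import cv2  # lazy import so poll_messages stays usable without OpenCV

    sock.setblocking(False)
    while True:
        ok, frame = capture.read()
        if not ok:
            break
        frame = cv2.resize(frame, None, fx=0.5, fy=0.5)  # halve width and height
        result = model(frame, verbose=False)[0]
        kpts = result.keypoints
        people = kpts.xyn.tolist() if kpts is not None else []
        sock.sendto(json.dumps({"people": people}).encode("utf-8"), unity_addr)
        for msg in poll_messages(sock):
            print("from Unity:", msg)
        if show:  # optional debug window with Ultralytics' own annotations
            cv2.imshow("pywui", result.plot())
            if cv2.waitKey(1) & 0xFF == ord("q"):
                break
```

Halving both dimensions cuts the pixel count to a quarter, which is where most of the latency saving comes from.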

Pose processing & filtering

The Yolo wrapper class hides most of the Ultralytics boilerplate. It initialises the model, binds it to the CPU or GPU, and keeps per-person histories so I can run temporal filters when needed. Depending on the rehearsal, I ran either plain predict mode or track mode with BoT-SORT, which kept IDs more stable when dancers crossed paths.
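A stripped-down version of such a wrapper might look like this. The class name, constructor arguments, and defaults are assumptions; the calls it makes (`predict`, and `track` with `persist=True` and `tracker="botsort.yaml"`) follow the public Ultralytics API. Injecting the model keeps the class testable without loading weights.

```python
class PoseEstimator:
    """Hypothetical wrapper that switches between Ultralytics predict and track modes."""

    def __init__(self, model, mode="predict", device="cpu", tracker="botsort.yaml"):
        if mode not in ("predict", "track"):
            raise ValueError(f"unknown mode: {mode!r}")
        self.model = model      # e.g. ultralytics.YOLO("yolov8n-pose.pt")
        self.mode = mode
        self.device = device    # "cpu", or a CUDA device index such as "0"
        self.tracker = tracker  # tracker config used only in track mode

    def infer(self, frame):
        if self.mode == "track":
            # persist=True keeps tracker state between calls, so IDs survive crossings.
            return self.model.track(
                frame, persist=True, tracker=self.tracker, device=self.device, verbose=False
            )
        return self.model.predict(frame, device=self.device, verbose=False)
```

In production this would be constructed as `PoseEstimator(YOLO("yolov8n-pose.pt"), mode="track", device="0")`.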

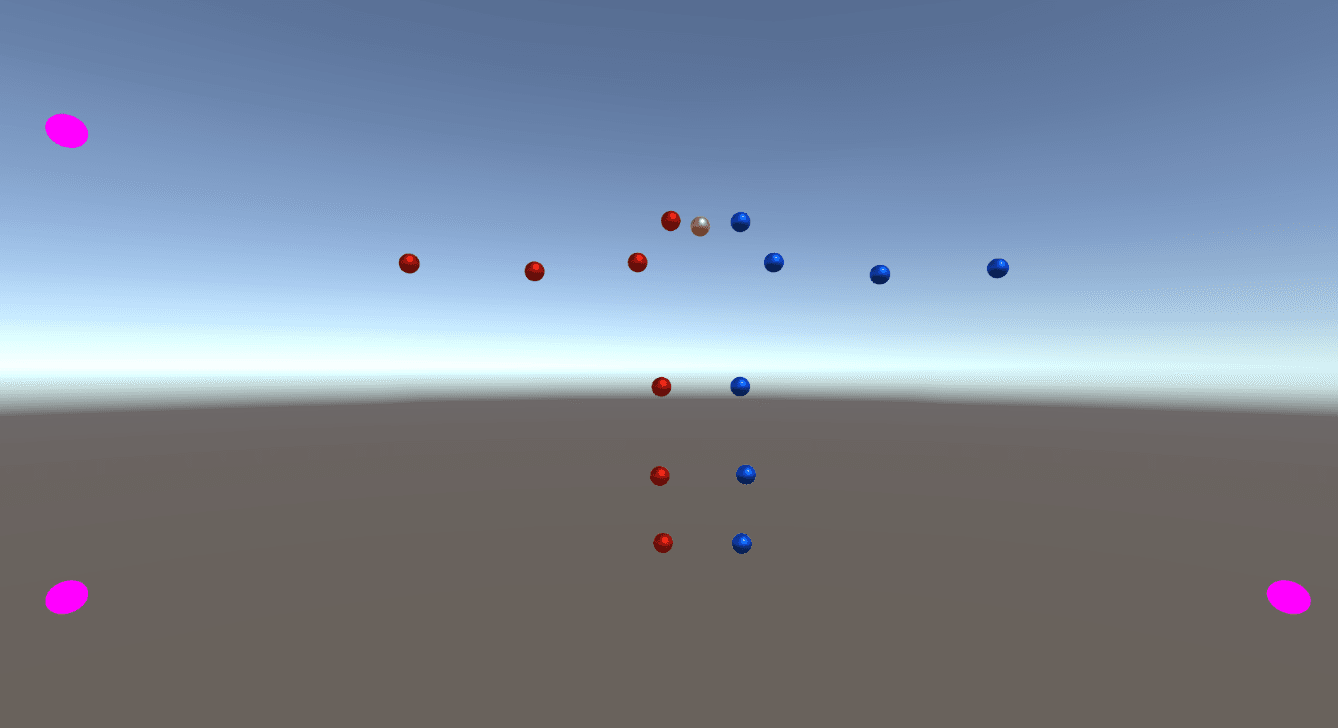

Parsing the model output took more than collecting keypoints. I use the normalised coordinates (xyn) so Unity receives values independent of the camera resolution, derive helper joints such as the shoulder and hip midpoints, and flag whether each skeleton is reliable enough to drive an avatar. Each packet therefore contains wrists, elbows, knees, ankles, facial points, and a synthetic inter_leg_position that stabilises the avatar's root.
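A sketch of the per-person payload, assuming the standard 17-point COCO keypoint order that YOLOv8 pose models use. The field names, the 0.5 confidence threshold behind the validity flag, and the definition of inter_leg_position (here, the midpoint between the knees) are hypothetical stand-ins, since the exact choices are not spelled out above.

```python
COCO_KEYPOINTS = [
    "nose", "left_eye", "right_eye", "left_ear", "right_ear",
    "left_shoulder", "right_shoulder", "left_elbow", "right_elbow",
    "left_wrist", "right_wrist", "left_hip", "right_hip",
    "left_knee", "right_knee", "left_ankle", "right_ankle",
]


def midpoint(a, b):
    return [(a[0] + b[0]) / 2, (a[1] + b[1]) / 2]


def build_person_payload(person_id, xyn, conf, min_conf=0.5):
    """Turn one skeleton (17 normalised (x, y) pairs plus confidences) into a dict."""
    # Rounding to 4 decimals keeps the JSON compact without visible jitter.
    joints = {name: [round(x, 4), round(y, 4)] for name, (x, y) in zip(COCO_KEYPOINTS, xyn)}
    scores = dict(zip(COCO_KEYPOINTS, conf))
    core = ("left_shoulder", "right_shoulder", "left_hip", "right_hip")
    return {
        "id": person_id,
        "joints": joints,
        "shoulder_mid": midpoint(joints["left_shoulder"], joints["right_shoulder"]),
        "hip_mid": midpoint(joints["left_hip"], joints["right_hip"]),
        # Assumption: a point between the knees used as a steadier root anchor.
        "inter_leg_position": midpoint(joints["left_knee"], joints["right_knee"]),
        # Valid only if the torso joints were detected with enough confidence.
        "valid": all(scores[name] >= min_conf for name in core),
    }
```

With Ultralytics, the inputs would typically come from `result.keypoints.xyn[i]` and `result.keypoints.conf[i]`, and in track mode `result.boxes.id` supplies the tracker IDs (it is `None` in plain predict mode).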

When the stage lighting or camera jitter created noisy coordinates, I leaned on a simple moving average. The wrapper keeps a rolling window per dancer, spawns a thread to compute the filtered output, and stores both raw and filtered histories. The plotting utility can compare both streams live or save a PNG after a rehearsal so we can check how much lag the filter introduced.
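The per-dancer rolling window could be sketched as below. This version runs inline rather than on a separate thread, and the class and method names are hypothetical.

```python
from collections import deque


class MovingAverageFilter:
    """Per-dancer simple moving average over the last `window` frames."""

    def __init__(self, window=5):
        if window < 1:
            raise ValueError("window must be >= 1")
        self.window = window
        self.history = {}  # person id -> deque of recent [[x, y], ...] frames

    def update(self, person_id, keypoints):
        """Add one frame of keypoints and return the averaged skeleton."""
        buf = self.history.setdefault(person_id, deque(maxlen=self.window))
        buf.append(keypoints)  # deque drops the oldest frame once full
        n = len(buf)
        return [
            [sum(frame[j][0] for frame in buf) / n, sum(frame[j][1] for frame in buf) / n]
            for j in range(len(keypoints))
        ]

    def forget(self, person_id):
        self.history.pop(person_id, None)  # dancer left the stage
```

Keeping a separate deque per ID means one dancer's motion never bleeds into another's average.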

Utility helpers for distance, angles, body-side detection, and head inclination live in utils.py. They came in handy for experimental gestures, such as triggering effects when a dancer tilted their head or leaned left. Even though those features didn't make it into the gala, they're ready for future iterations.
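A few of these helpers, sketched in plain Python. The function names and conventions (angles in degrees, a dancer facing the camera, a 0.02 lean threshold in normalised units) are assumptions, and `lean_direction` is only one possible reading of body-side detection.

```python
import math


def distance(a, b):
    return math.hypot(b[0] - a[0], b[1] - a[1])


def angle_at(a, b, c):
    """Angle ABC in degrees, e.g. the elbow angle from shoulder, elbow, wrist."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        return 0.0  # degenerate: two joints coincide
    cos = (v1[0] * v2[0] + v1[1] * v2[1]) / norm
    return math.degrees(math.acos(max(-1.0, min(1.0, cos))))  # clamp rounding error


def head_inclination(left_eye, right_eye):
    """Signed roll of the eye line in degrees (0 = level).

    Assumes the dancer faces the camera, so their left eye appears on the image right.
    """
    return math.degrees(math.atan2(left_eye[1] - right_eye[1], left_eye[0] - right_eye[0]))


def lean_direction(shoulder_mid, hip_mid, threshold=0.02):
    """'left', 'right' or 'center' in image coordinates (mirror for the dancer's view)."""
    dx = shoulder_mid[0] - hip_mid[0]
    if dx < -threshold:
        return "left"
    if dx > threshold:
        return "right"
    return "center"
```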

UDP bridge with Unity

For networking I adapted Siliconifier's open-source UDP helper. It creates a bidirectional socket, runs the receive side on a background thread, and reports errors gracefully when Unity isn't listening yet. Because UDP is connectionless, I could send an update every frame without any handshake; if a packet drops, the next frame's packet simply replaces it.
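The same idea, reduced to a minimal stand-in. This is not Siliconifier's helper or its API; the class name, port, and 0.2 s receive timeout are assumptions. The receive thread blocks on `recvfrom` with a timeout so it can notice shutdown, and messages land in a lock-protected inbox that the main loop drains.

```python
import socket
import threading


class UdpBridge:
    """Hypothetical bidirectional UDP endpoint with a background receive thread."""

    def __init__(self, local_port=0, remote=("127.0.0.1", 5052)):
        self.remote = remote                        # where Unity is assumed to listen
        self.sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
        self.sock.bind(("127.0.0.1", local_port))  # port 0 = let the OS pick
        self.sock.settimeout(0.2)                   # lets the thread notice shutdown
        self._inbox = []
        self._lock = threading.Lock()
        self._running = True
        self._thread = threading.Thread(target=self._receive_loop, daemon=True)
        self._thread.start()

    def send(self, text):
        try:
            self.sock.sendto(text.encode("utf-8"), self.remote)
        except OSError as exc:
            # Report instead of crashing, e.g. before Unity has started.
            print(f"UDP send to {self.remote} failed: {exc}")

    def _receive_loop(self):
        while self._running:
            try:
                data, _addr = self.sock.recvfrom(65535)
            except socket.timeout:
                continue  # nothing this interval; re-check _running
            except OSError:
                continue  # e.g. Windows ConnectionResetError when no peer listens
            with self._lock:
                self._inbox.append(data.decode("utf-8"))

    def drain(self):
        """Return and clear everything received since the last call."""
        with self._lock:
            messages, self._inbox = self._inbox, []
        return messages

    def close(self):
        self._running = False
        self._thread.join()
        self.sock.close()
```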

On the Unity side (not shown in this repo), I mapped the JSON payload to Mecanim rigs. Each detected person spawns a prefab with a labelled set of joints: as soon as a new ID appears, Unity instantiates a new skeleton, and when a dancer walks off stage, the slot is reused. During the gala we projected multiple avatars styled after Olympic disciplines (gymnastics, fencing, athletics), and their motion stayed tethered to the live performers thanks to this stream.
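The Unity scripts are C# and not part of this repo, but the slot-reuse bookkeeping is language-agnostic. Here is a hypothetical Python sketch of it: each tracker ID gets the lowest free avatar slot, and a slot is released as soon as its ID disappears.

```python
class SlotPool:
    """Hypothetical mapping from tracker IDs to a fixed number of avatar slots."""

    def __init__(self, n_slots):
        self.free = list(range(n_slots))  # slot indices not currently in use
        self.assigned = {}                # tracker id -> slot index

    def update(self, visible_ids):
        """Sync slots with the IDs seen this frame and return the current mapping."""
        visible = set(visible_ids)
        # Release the slot of every dancer who is no longer detected.
        for pid in list(self.assigned):
            if pid not in visible:
                self.free.append(self.assigned.pop(pid))
        self.free.sort()
        # Give each newly seen ID the lowest free slot, while any remain.
        for pid in visible_ids:
            if pid not in self.assigned and self.free:
                self.assigned[pid] = self.free.pop(0)
        return dict(self.assigned)
```

Releasing on the first missed frame is the simplest policy; waiting a few frames before freeing a slot would let a briefly occluded dancer keep their avatar.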

Lessons from the gala

- Latency budgets matter. Halving the frame size and trimming the payload kept round-trip latency below roughly 120 ms on modest hardware, which was acceptable for the dancers.

- Robustness beats cleverness. UDP offers no delivery guarantees, but in practice it was the least intrusive option for the theatre network. I also added plenty of logging hooks so we could diagnose issues during rehearsals.

- Human factors count. The filtering window is tweakable because every dancer moves differently; choreographers appreciated being able to trade responsiveness for stability per routine.
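The responsiveness/stability trade-off can be quantified. For a causal simple moving average over the last $N$ frames captured at $f$ frames per second, feeding in a steady ramp $x_t = vt$ shows the lag directly:

$$
\hat{x}_t = \frac{1}{N}\sum_{k=0}^{N-1} x_{t-k}
= \frac{1}{N}\sum_{k=0}^{N-1} v\,(t-k)
= v\left(t - \frac{N-1}{2}\right),
\qquad
\tau = \frac{N-1}{2f}.
$$

The filtered joint therefore trails the real one by $(N-1)/2$ frames. As an illustration only (window and frame rate assumed, not measured at the gala), $N = 5$ at $f = 30$ fps gives $\tau = 4/60$ s, about 67 ms, on top of capture and inference latency.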

Roadmap

The show proved the concept, but there are still ideas on my list:

- Replace the moving average with a Butterworth or Kalman filter to better handle quick spins.

- Package the Python side into a Docker image so technicians can launch it with one command before performances.

- Extend the Unity integration with inverse kinematics to drive full-body avatars instead of partial rigs.

- Use the helper metrics (angles, head tilt) to trigger VFX cues tied to choreography.
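For the Kalman option, one common starting point is a constant-velocity model run independently on each coordinate of each joint. The sketch below is a hypothetical illustration, not a tuned implementation: the frame interval `dt`, the acceleration variance `q`, and the measurement noise `r` are placeholder values. Because it estimates velocity, it follows steady motion without the built-in lag of a moving average.

```python
class ConstantVelocityKalman:
    """1D Kalman filter with state [position, velocity]; run one per coordinate."""

    def __init__(self, dt=1 / 30, q=50.0, r=1e-4):
        self.dt, self.q, self.r = dt, q, r  # frame interval, accel. variance, meas. noise
        self.x = None                        # [position, velocity], set on first sample
        self.P = [[1.0, 0.0], [0.0, 1.0]]    # state covariance

    def update(self, z):
        """Fold in one position measurement and return the filtered position."""
        if self.x is None:
            self.x = [z, 0.0]
            return z
        dt, q = self.dt, self.q
        # Predict with F = [[1, dt], [0, 1]]: x = F x, P = F P F^T + Q.
        p = self.x[0] + dt * self.x[1]
        v = self.x[1]
        (a, b), (c, d) = self.P
        a, b, c, d = a + dt * (b + c) + dt * dt * d, b + dt * d, c + dt * d, d
        # Q for a discrete white-noise acceleration model.
        a += q * dt**4 / 4
        b += q * dt**3 / 2
        c += q * dt**3 / 2
        d += q * dt**2
        # Update with H = [1, 0]: only position is measured.
        s = a + self.r
        k0, k1 = a / s, c / s
        y = z - p
        self.x = [p + k0 * y, v + k1 * y]
        self.P = [[(1 - k0) * a, (1 - k0) * b], [c - k1 * a, d - k1 * b]]
        return self.x[0]
```

Raising `q` makes the filter react faster to sudden spins; raising `r` trusts the detector less and smooths more.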

Closing thoughts

Building this pipeline was my way of blending creative coding with live performance. From the first OpenCV frame to seeing a virtual gymnast mimic a dancer in Toulon, the project reminded me why I love working at the intersection of media and technology. Now that the core bridge is solid, I can keep iterating—new models, richer avatars, more immersive stages.